Article Summary

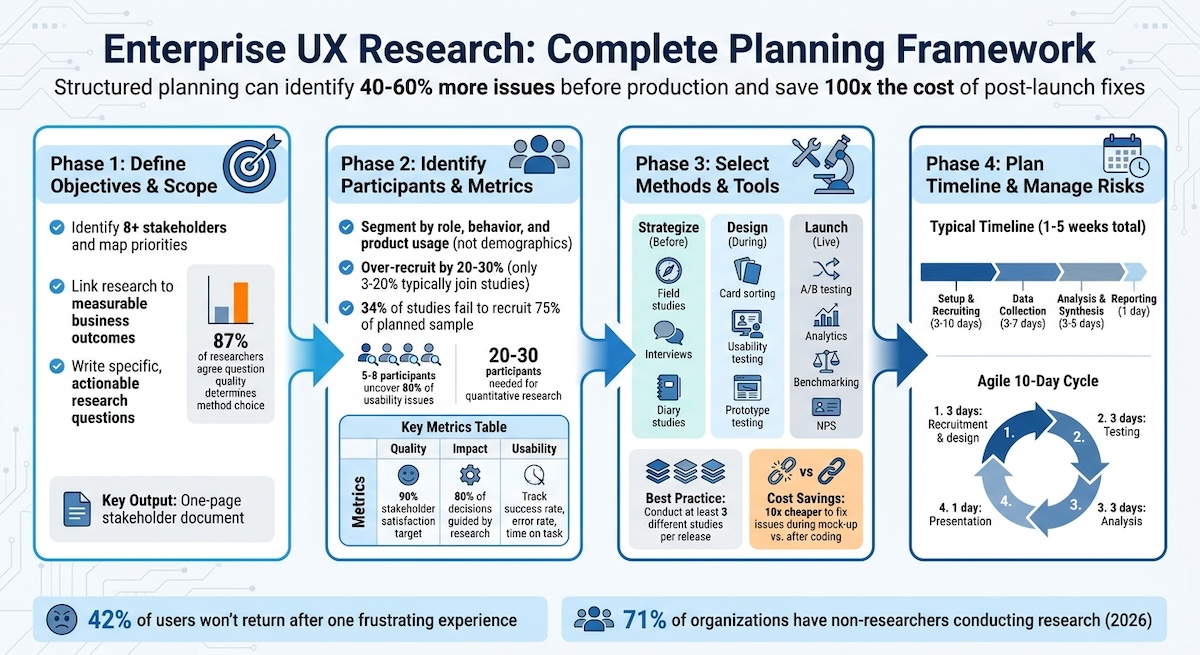

Fixing usability issues during the mock-up stage costs roughly one-tenth as much as addressing them after coding, and problems identified post-launch can cost up to 100 times more to resolve than those caught during the research phase. Teams using structured research checklists identify 40 to 60% more issues before production than teams relying on ad-hoc reviews. In 2026, 71% of organizations report that some of their research is conducted by people who are not dedicated researchers, which makes structured planning the primary mechanism for maintaining methodological rigor at scale.

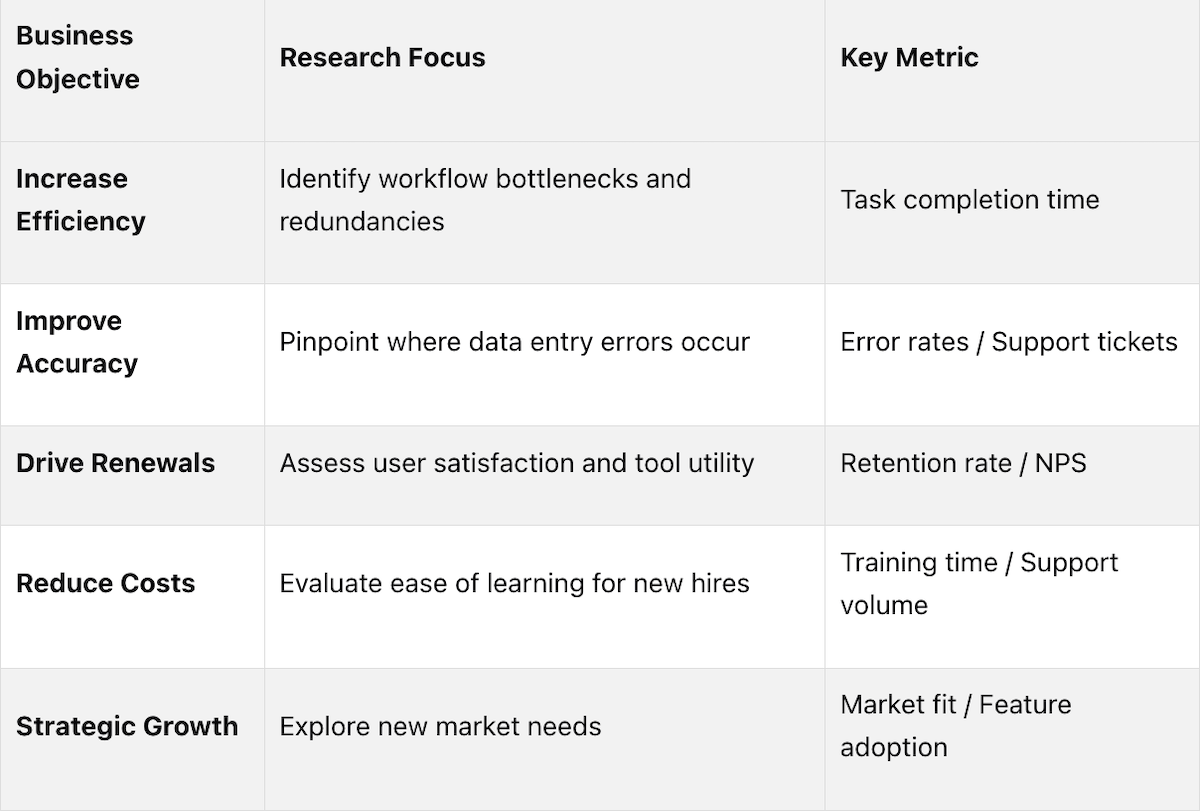

Research objectives should be linked directly to the metrics the business already tracks rather than to research-specific outputs alone. Efficiency goals map to task completion time. Accuracy goals map to error rates and support ticket volumes. Retention goals map to NPS and renewal rates. Cost reduction goals map to training time and support volume. This alignment connects research findings to decisions leadership is already equipped to make, instead of producing insights that sit apart from business priorities.

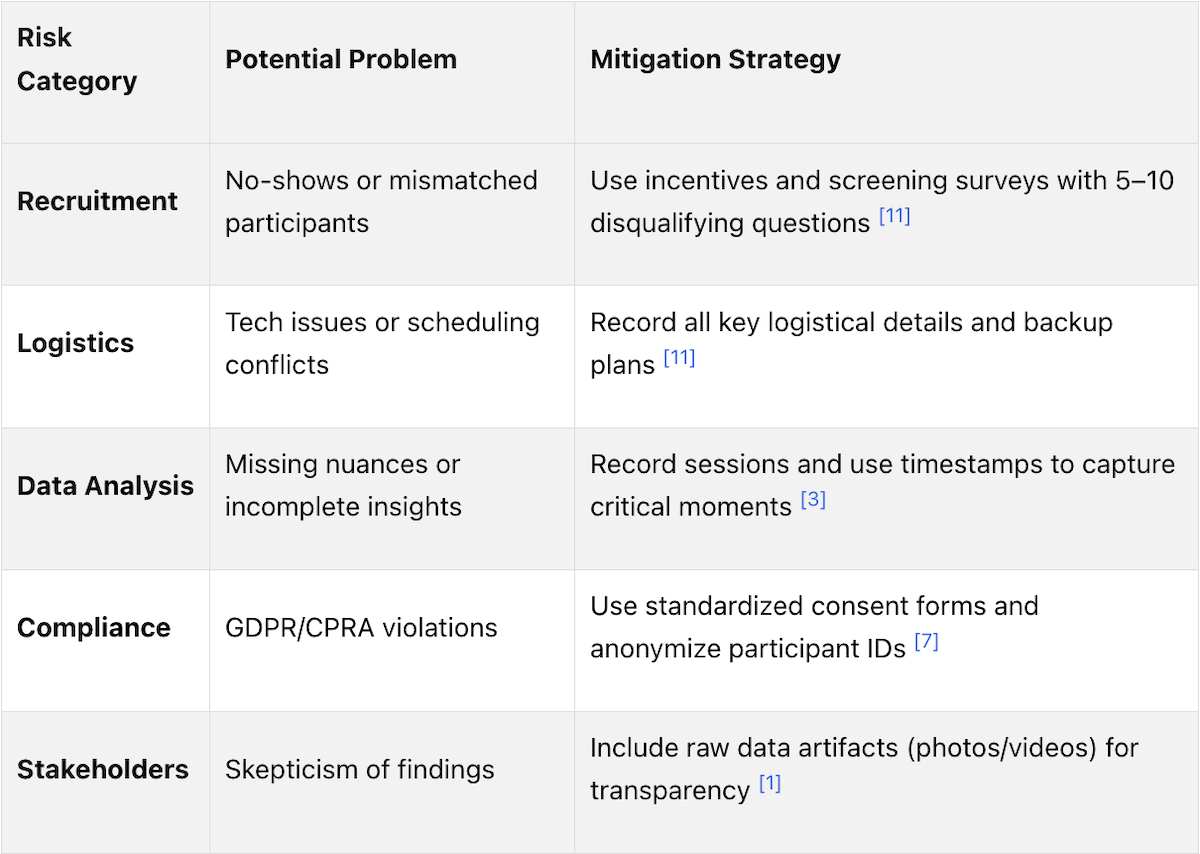

Only 3% to 20% of eligible participants typically agree to join a research study, and 34% of studies fail to recruit even 75% of their planned sample size. Enterprise research compounds this challenge because the person purchasing software is frequently not the person using it daily, so recruitment criteria must be built around behavioral attributes, job responsibilities, and usage patterns rather than titles or demographics. The primary risk mitigation strategies are over-recruiting by 20 to 30% for qualitative studies and by 10% for quantitative studies, and using screening questions that verify task-level experience.
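
The recruitment figures above can be turned into a rough planning calculation. The sketch below is illustrative only: the function names `ceil_div` and `recruitment_plan` and the sample sizes of 10 and 200 are assumptions, not part of the article. It takes a target sample size, an over-recruiting percentage, and an expected acceptance percentage, and estimates how many participants to recruit and how many eligible people to invite. Percentages are passed as whole numbers so the rounding stays exact.

```python
def ceil_div(numerator: int, denominator: int) -> int:
    """Integer division that rounds up, avoiding floating-point rounding."""
    return -(-numerator // denominator)


def recruitment_plan(target_sample: int, over_recruit_pct: int, acceptance_pct: int) -> tuple[int, int]:
    """Estimate (participants to recruit, eligible people to invite).

    target_sample    -- planned number of completed sessions or responses
    over_recruit_pct -- buffer for no-shows and dropouts, e.g. 30 for 30%
    acceptance_pct   -- expected share of invitees who agree, e.g. 20 for 20%
    """
    if target_sample <= 0:
        raise ValueError("target_sample must be positive")
    if over_recruit_pct < 0:
        raise ValueError("over_recruit_pct must not be negative")
    if not 0 < acceptance_pct <= 100:
        raise ValueError("acceptance_pct must be between 1 and 100")

    # Add the over-recruiting buffer on top of the planned sample.
    recruits = ceil_div(target_sample * (100 + over_recruit_pct), 100)
    # Scale up by the acceptance rate to get the size of the invitation pool.
    invitations = ceil_div(recruits * 100, acceptance_pct)
    return recruits, invitations


# Hypothetical qualitative study: 10 sessions, 30% buffer.
print(recruitment_plan(10, 30, 20))   # best case, 20% acceptance
print(recruitment_plan(10, 30, 3))    # worst case, 3% acceptance

# Hypothetical quantitative study: 200 responses, 10% buffer.
print(recruitment_plan(200, 10, 20))
```

Running the best and worst acceptance rates side by side shows how wide the invitation pool has to be when acceptance sits at the low end of the 3% to 20% range, which is useful when deciding whether a panel or customer list is large enough before the study is scheduled.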

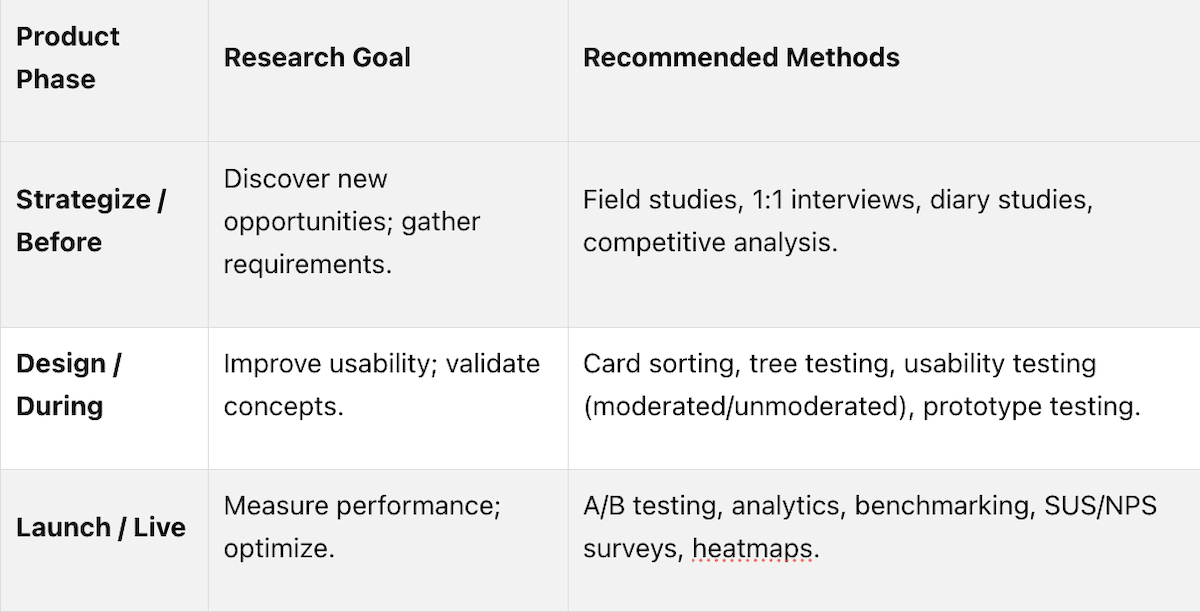

Before design begins, generative methods including field studies, one-on-one interviews, and diary studies uncover opportunities and gather requirements. During the design phase, formative methods including card sorting, tree testing, and moderated usability testing refine workflows and validate concepts. After launch, summative methods including A/B testing, analytics, benchmarking, and NPS surveys measure performance and inform optimization. Experienced researchers recommend conducting at least three different studies per product release, combining qualitative methods to understand why users behave a certain way with quantitative methods to measure how many are affected.

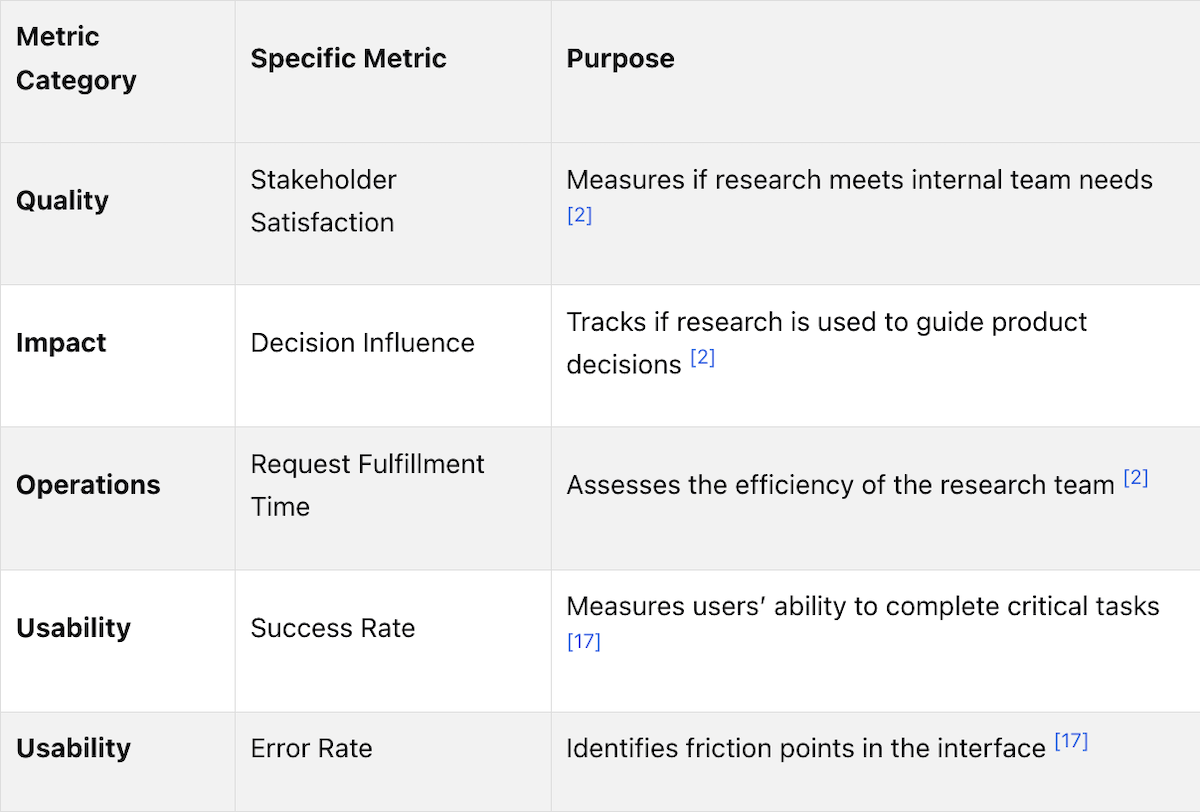

Behavioral metrics, including task success rates, time on task, and error rates, measure usability performance. Impact metrics track the percentage of product decisions influenced by user insights, with a target of 80% in mature research operations. Stakeholder satisfaction with research quality has a target of 90% in systematized environments. Operational metrics, such as the average time from research request to delivered insights, measure team efficiency. In mature enterprise settings, the goal is for 80% of teams to be capable of conducting basic research independently, with professional researchers guiding studies rather than executing all of them.
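
The behavioral metrics named above can be computed from simple session logs. The following is a minimal sketch under stated assumptions: the `TaskAttempt` record, the `summarize` function, and the sample data are hypothetical, time on task is reported as the median over successful attempts only, and the error rate is expressed as errors per attempt. Teams may define these metrics differently.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class TaskAttempt:
    """One participant's attempt at one task (hypothetical record shape)."""
    participant: str
    completed: bool
    seconds: float
    errors: int


def summarize(attempts: list[TaskAttempt]) -> dict[str, Optional[float]]:
    """Compute task success rate, median time on task, and errors per attempt."""
    if not attempts:
        raise ValueError("at least one attempt is required")

    total = len(attempts)
    # Share of attempts in which the participant finished the task.
    success_rate = sum(1 for a in attempts if a.completed) / total
    # Assumption: time on task only counts attempts that were completed.
    completed_times = [a.seconds for a in attempts if a.completed]
    median_time = median(completed_times) if completed_times else None
    # Assumption: error rate is the mean number of errors per attempt.
    errors_per_attempt = sum(a.errors for a in attempts) / total

    return {
        "success_rate": success_rate,
        "median_seconds_on_task": median_time,
        "errors_per_attempt": errors_per_attempt,
    }


# Made-up sample data for illustration only.
sample = [
    TaskAttempt("p1", True, 40.0, 0),
    TaskAttempt("p2", True, 60.0, 1),
    TaskAttempt("p3", False, 120.0, 3),
    TaskAttempt("p4", True, 50.0, 0),
]
print(summarize(sample))
```

Keeping the definitions in one function makes it easier to report the same numbers across studies, so that a change in task success between releases reflects the product rather than a change in how the metric was calculated.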